To place an automated call, customers need to define what questions they want answered. The challenge: calls vary enormously in complexity, customers are non-technical ops teams who don't have time for involved setup, and whatever we built also needed to be legible to the AI that would actually conduct the call.

I explored three fundamentally different approaches before converging on a solution.

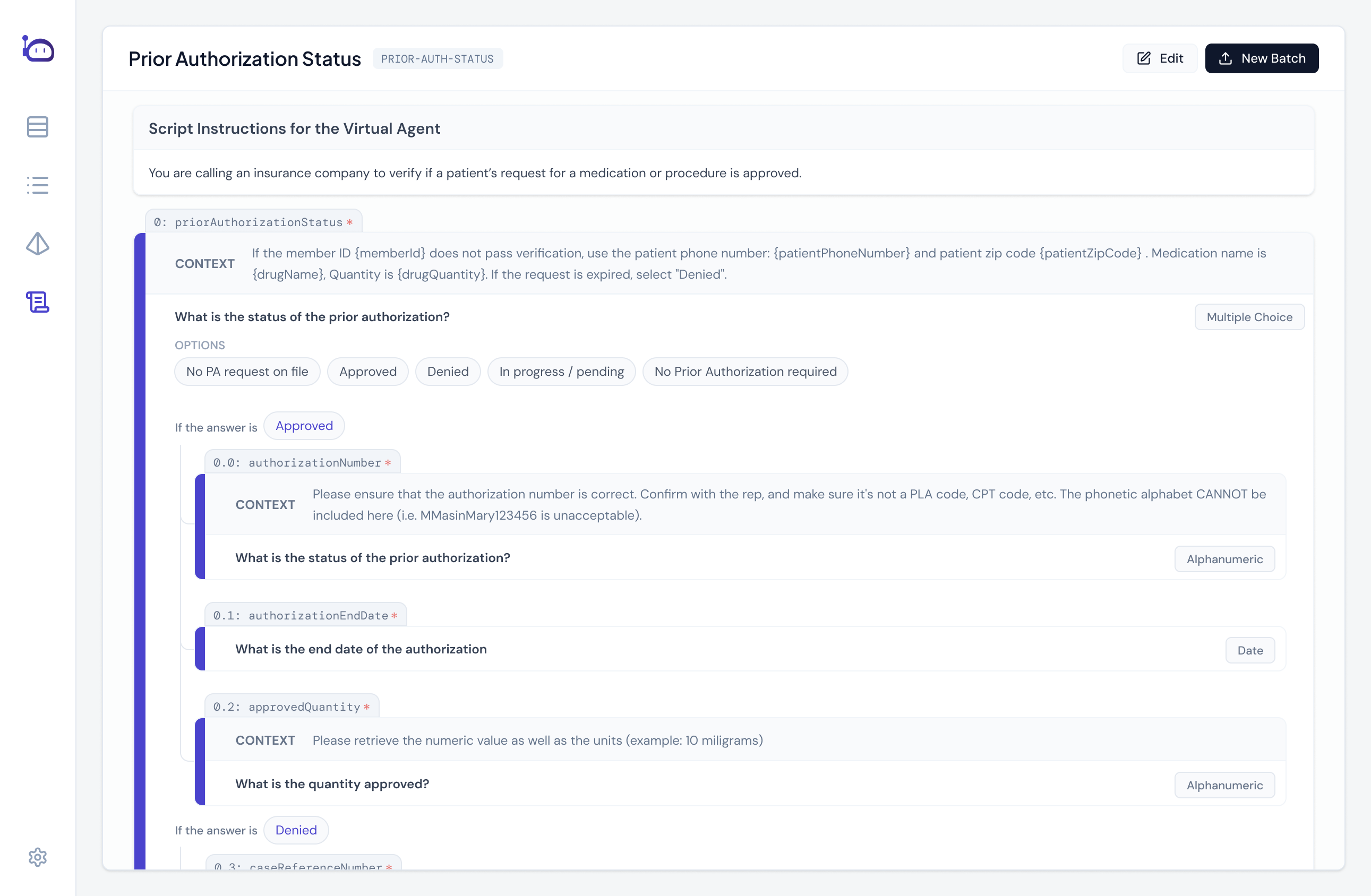

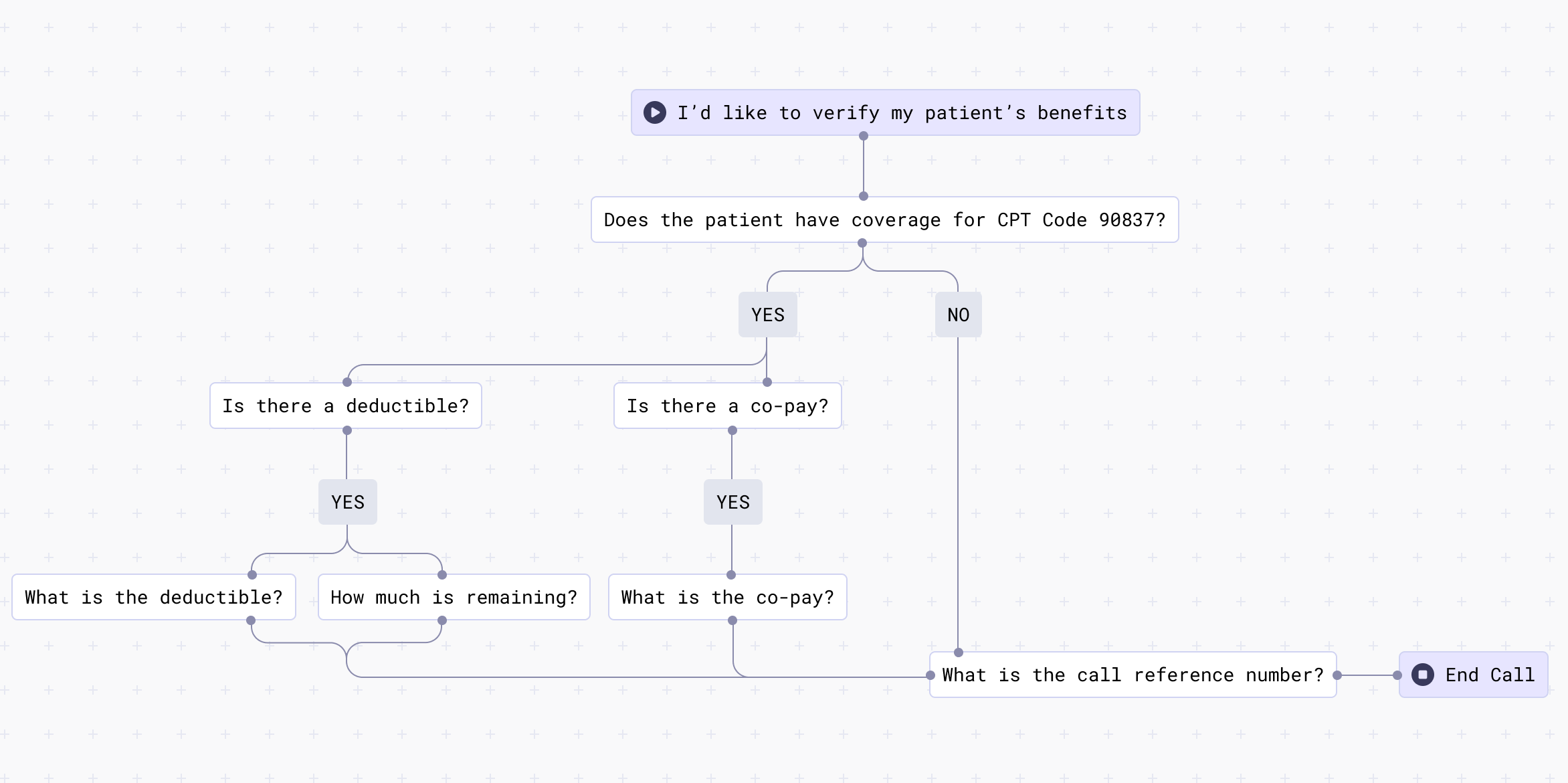

The first was a node-based decision model: powerful, capable of handling highly complex branching logic, and... immediately too much. Ops teams flagged that it would require dedicated support to implement, and the complex decision trees would actually constrain the AI when calls deviated from the script. Verdict? Over-engineered.

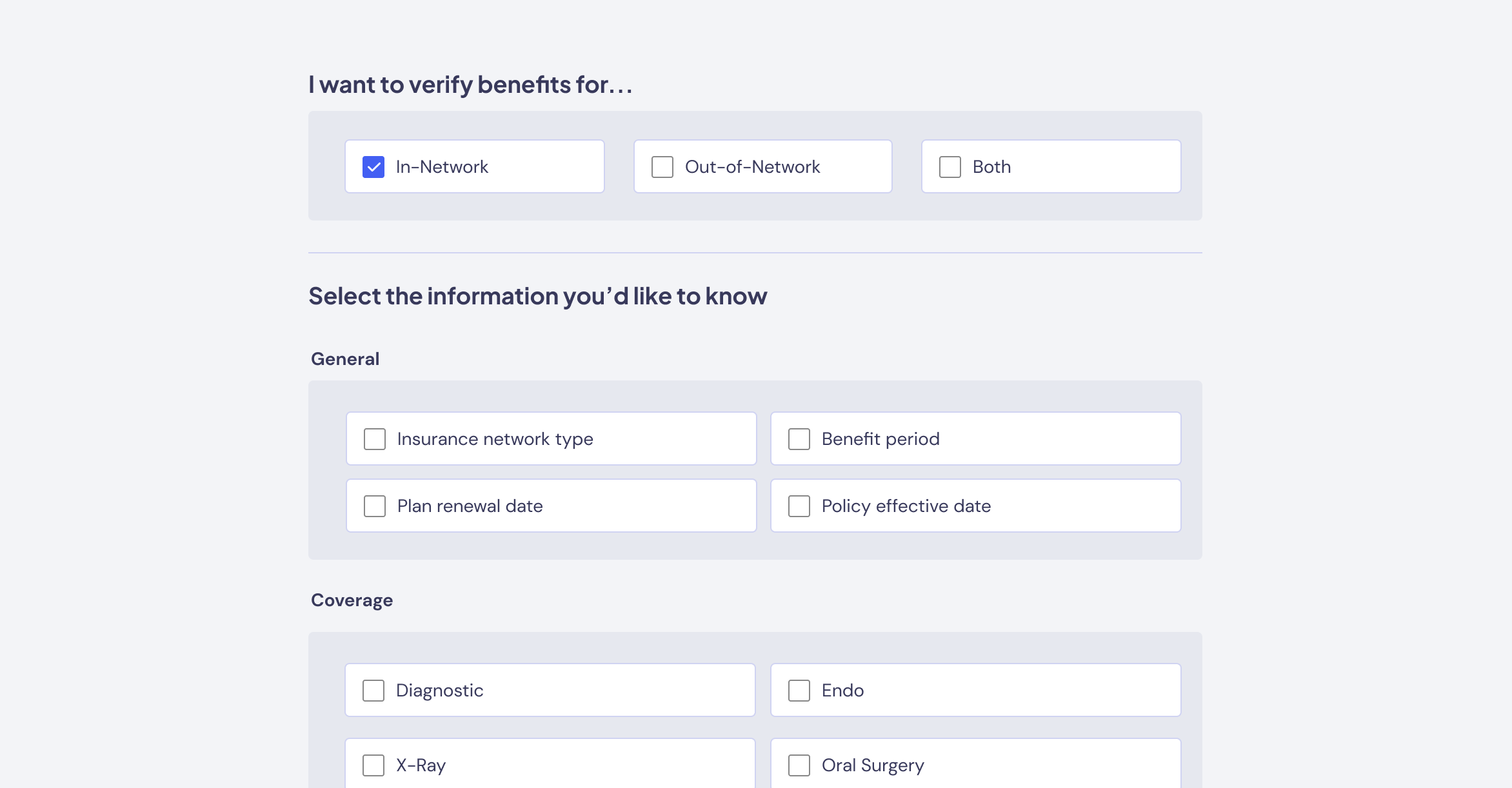

The second flipped the approach entirely: users would only select what information they wanted captured, and the system would generate the questions internally. Cleaner for engineering, better for AI consistency — but customers hated losing visibility into what was actually being asked. Verdict? Too opaque.

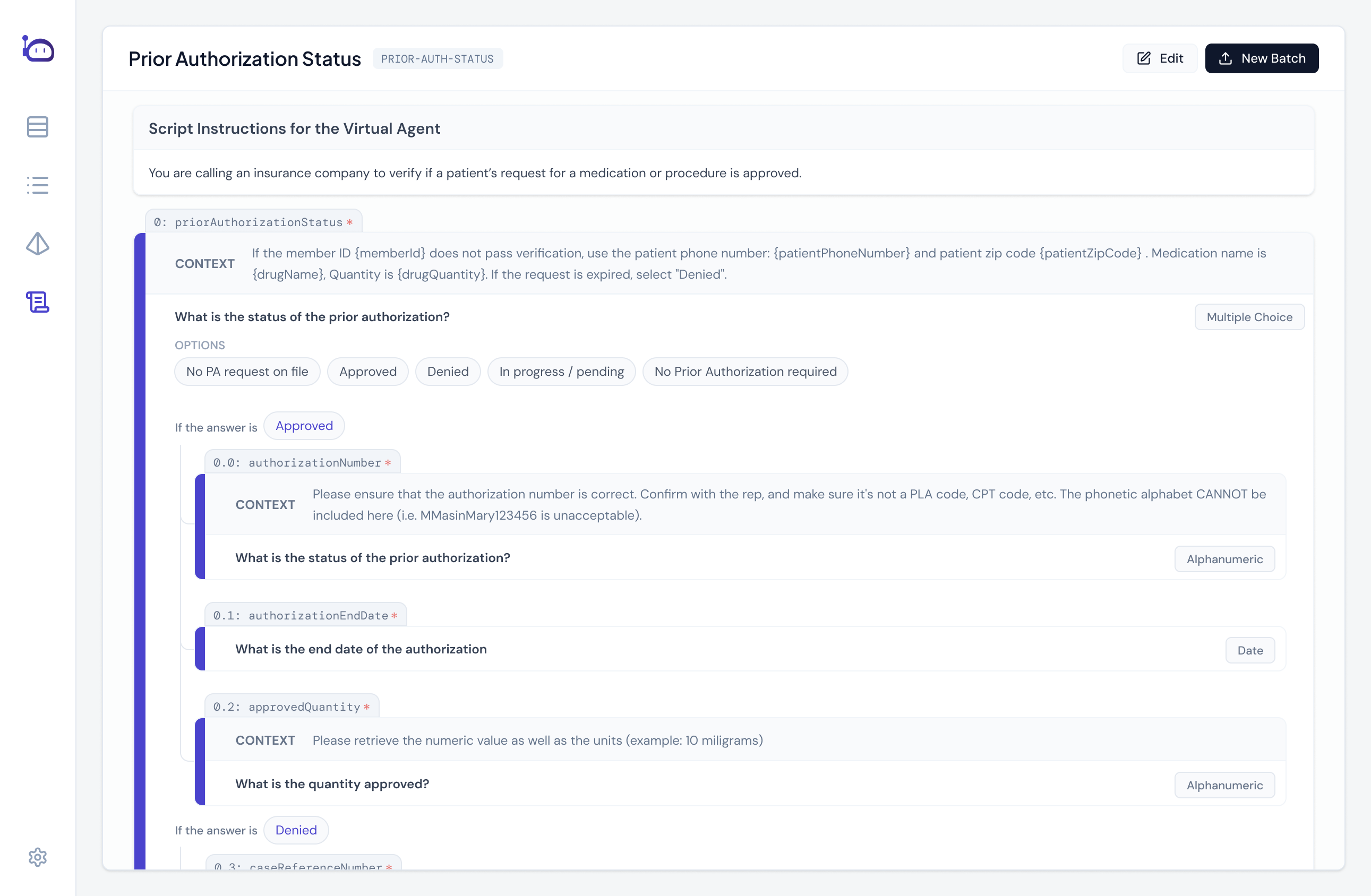

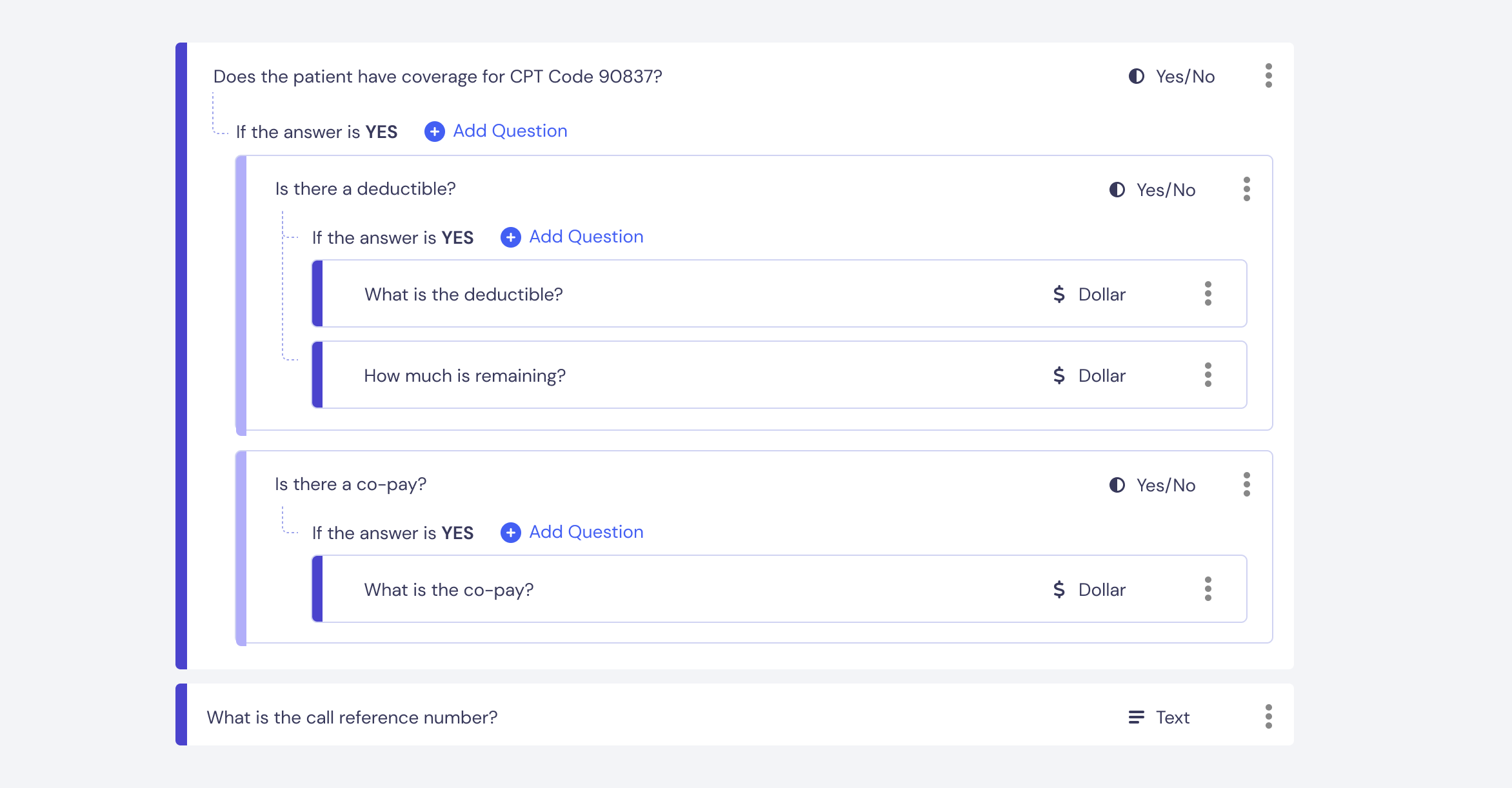

The third direction split the difference: a form-based Script Builder (familiar to anyone who's used Google Forms) that let teams define what questions to ask while keeping the interaction model simple. It offered enough structure for consistent data capture, enough flexibility for different operational styles, and enough clarity for the AI to interpret.

We implemented v1 of our Script Builder in Fall 2023 and have continued iterating since. The Script Builder evolves as we gain greater clarity on use cases. For example, we've found that many customers want similar scripts, so we've created template "base" scripts that get you 70% of the way there. Our core design didn't box us into a corner, which has allowed us to adapt easily to changes (and there were MANY changes). The Script Builder isn't some groundbreaking feature, but it manages to suit the complex needs of our customers while being easy to understand, and I'm quite happy with that.